Content Removed by Direct Channels and FOA’s Volunteers

In 2025, FOA significantly increased its content removal rate compared to 2024. Of the content reported through FOA’s direct channels, 67% was removed, up from 53% in 2024, while 35% of content reported by volunteers was removed, up from 28% in 2024. Most of the monitored content was in English, Russian, French, Spanish, Arabic, and German.
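The year-over-year changes can be worked out directly from these rates. A minimal worked example follows. It assumes the volunteer figures are removal rates for individually submitted reports. The rise is 14 percentage points for FOA’s channels and 7 percentage points for volunteers, which correspond to relative increases of roughly 26% and 25%:

$$
\begin{aligned}
\Delta_{\text{FOA}} &= 67\% - 53\% = 14 \text{ percentage points}, &\quad \frac{67 - 53}{53} &\approx 0.26 \\
\Delta_{\text{vol}} &= 35\% - 28\% = 7 \text{ percentage points}, &\quad \frac{35 - 28}{28} &= 0.25
\end{aligned}
$$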

This quantitative rise highlights the growing impact of FOA’s early intervention strategies and direct escalation channels with social media platforms. In 2025, content reported through FOA’s direct escalation channels was removed at far higher rates than content reported by individuals on every major platform. This shows that trained intervention, not volume alone, is what stops online incitement from spreading.

Comparing FOA’s professional removal rate with the standard removal rate for volunteer (user) reports in 2025 shows that NGO reporting is significantly more effective than individual reporting on all platforms. FOA also expanded its research and investigations into platform policies throughout 2025. These efforts improved our use of escalation channels and raised the content removal rate by 14 percentage points over 2024. On the one hand, this demonstrates FOA’s value to the platforms as a partner flagger. On the other hand, it highlights the “preferential treatment” given to FOA as an NGO: a report of hateful material made by a volunteer as an individual is less likely to result in removal than a report made by the same volunteer through FOA.

On TikTok, FOA’s specialized escalation channels achieved a success rate of approximately 85%, while standard volunteer reports reached roughly 35%. Similar trends were observed on Meta, where FOA achieved a 62% removal rate compared with 32% for volunteers who submitted complaints individually. The same pattern held on YouTube, where FOA achieved 60% compared with roughly 22%. On X, professional reports succeeded roughly 48% of the time, whereas volunteer reports succeeded in 18% of cases.

In several cases, content initially rejected by platforms was successfully removed following persistent appeals, underscoring the importance of professional, evidence-based engagement.

Content Removed by FOA’s Direct Channels: 2024 vs. 2025

The chart illustrating removal rates across these social media platforms reveals progress on every monitored site between 2024 and 2025.

Monitored Content by Type of Antisemitism

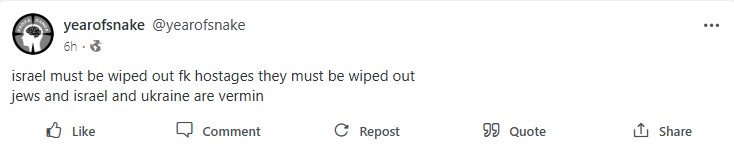

FOA’s monitoring data from 2025 shows not only a quantitative rise in antisemitic content but also a qualitative escalation. Calls for violence, glorification of terror, and direct threats became more explicit, particularly during periods of geopolitical tension.

Over the past year, these threats of violence have become a larger and more frequent part of the digital discourse.

In 2024, inciting material accounted for 13% of all monitored content. By 2025, that figure had climbed to over 17%. The share of documented content classified as incitement therefore grew by nearly a third in a single year, an indication that the internet is becoming a more hostile and dangerous environment.
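As a quick check on the “nearly a third” figure, the relative change can be computed from the two shares. The 2025 value is treated here as 17%. Because the text says “over 17%,” this gives a lower bound:

$$
\frac{17 - 13}{13} = \frac{4}{13} \approx 0.31
$$

In other words, incitement’s share of monitored content grew by at least about 31% relative to 2024, consistent with the “nearly a third” description.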

The 2025 distribution of monitored hate content, averaged across major social media platforms including Meta, X, TikTok, and YouTube, shows that classic antisemitic narratives remain the largest category at 33%. This is followed by incitement or glorification of violence at 22%. Support for terrorism and anti-Israeli/anti-Zionist content each account for 15% of detected material, while Holocaust denial and false or fake information represent 11% and 5% of the distribution, respectively.

This data illustrates that while traditional tropes remain dominant, explicit calls for violence represent an alarming and substantial portion of online hate speech.

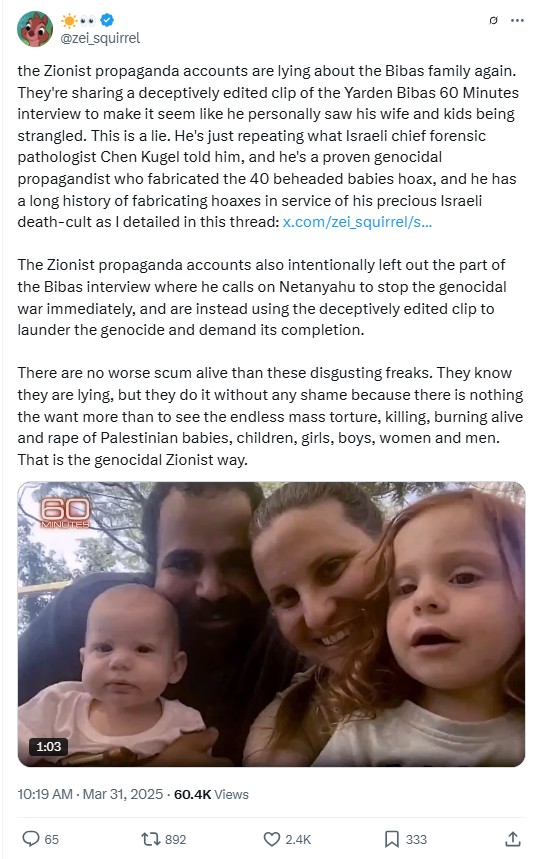

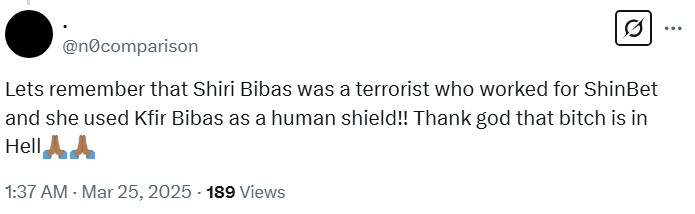

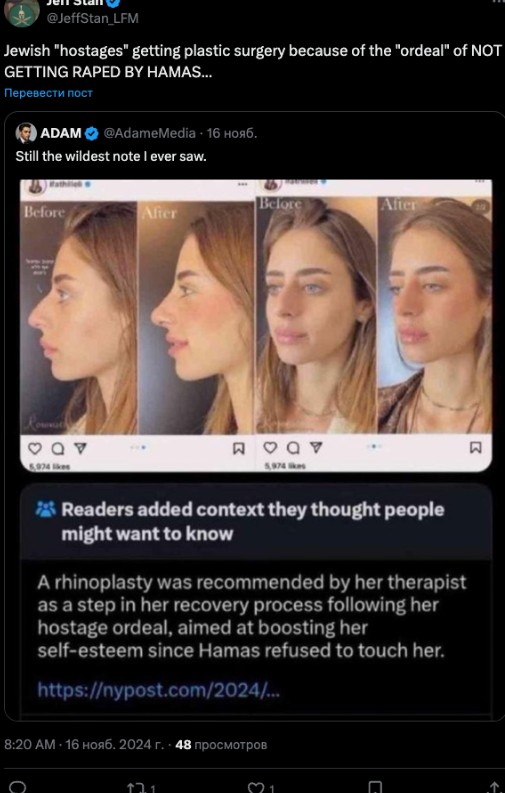

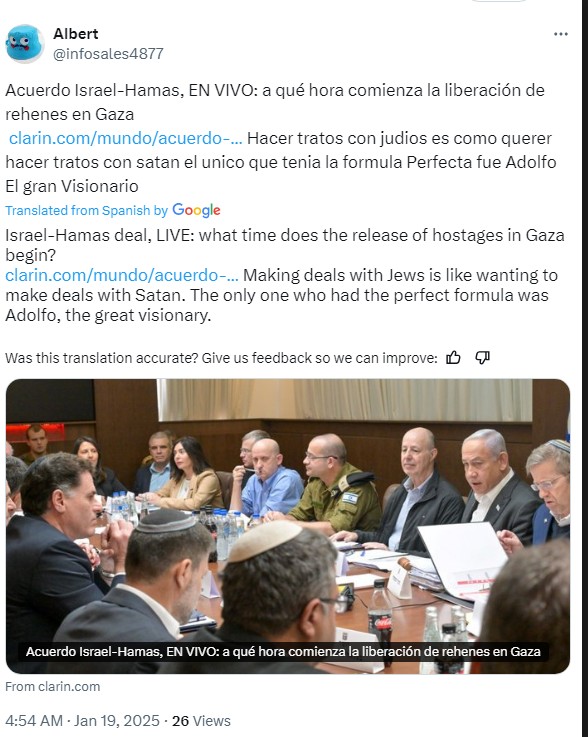

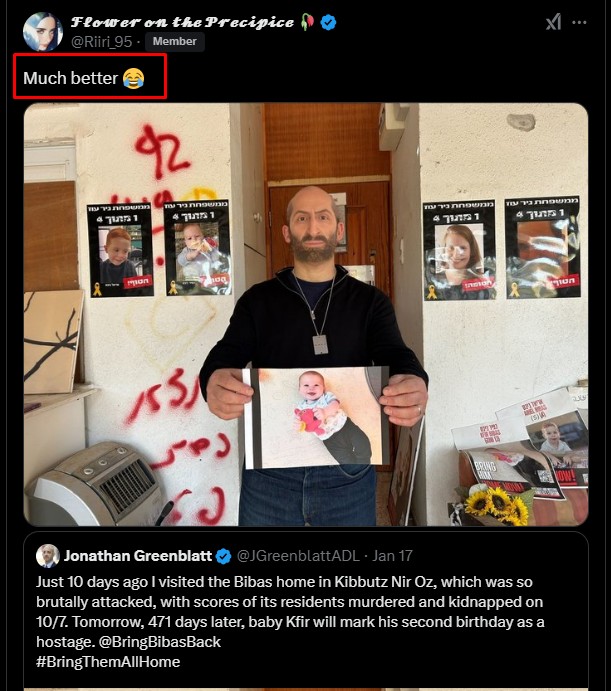

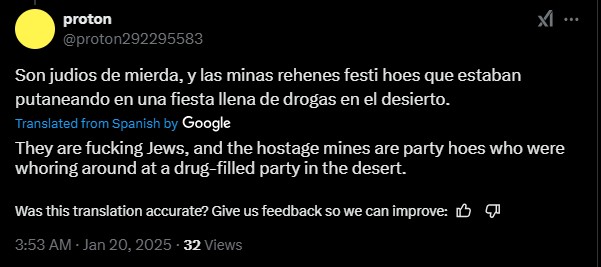

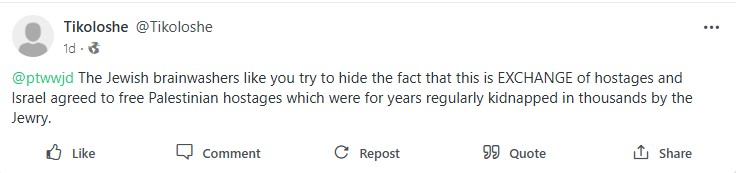

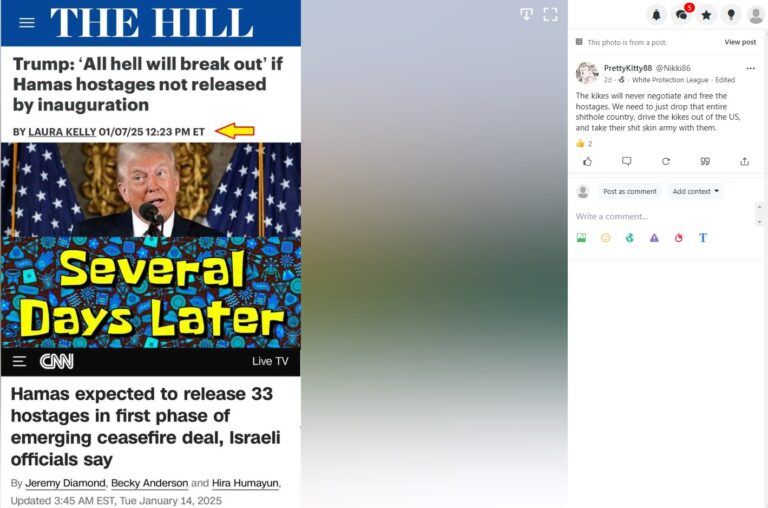

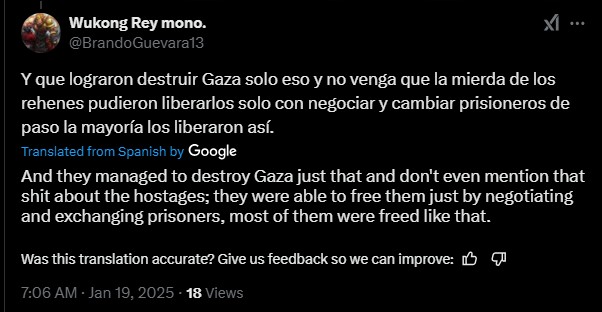

The murder of Shiri Bibas and her two young children, Ariel and Kfir, is one of the most horrifying incidents of Hamas’s cruelty. The confirmation that a mother and her babies were killed by their captors shocked people around the world. On social media, users shared content that went far beyond political debate. Posts and memes mocked the murdered children and even praised the violence. The suffering of an innocent family was stripped of humanity and turned into material for hatred and incitement.

Gallery of hostage-related posts, all of which were successfully removed

Throughout 2025, FOA placed significant emphasis on locating and removing harmful comments and removed more than 1,000 of them. Antisemitic comments appear frequently beneath pro-Israeli content, and they are also highly prevalent in the comment sections of biased news outlets such as the BBC and Al Jazeera. By focusing specifically on these discussion threads, FOA was able to target such comments more effectively. The comments shown here were collected in October and December 2025, and all of them have since been removed.

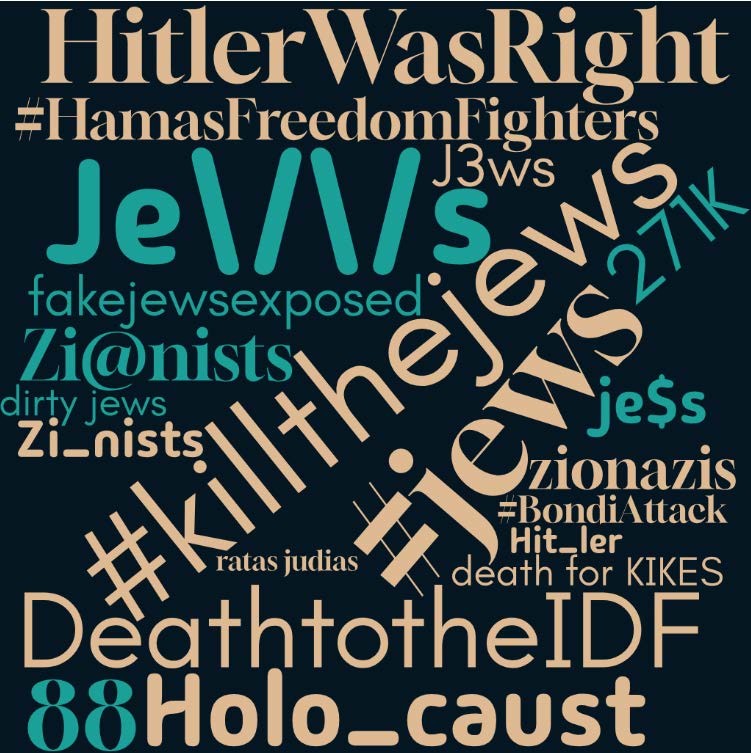

Top Hashtags and Keywords

FOA monitors and documents the hashtags and keywords used in its content searches and in the aggregated content. We compiled a list of the most frequently used hashtags and keywords.

A notable finding is the use of alternative spellings to evade social media platforms’ automated moderation systems. For instance, terms such as “J*ws” or “j€ws” are deliberately misspelled to avoid detection, demonstrating how users adapt their language to slip past automated filters. The Top 20 Hashtags, compiled below, reflect prevalent trends in content and keyword usage: